I needed to create a small OKD/OpenShift setup so I could play around with it and get comfortable with using it, developing on it and administering it. Additionally I wanted it to be somewhat similar in setup to what we do at work.

This was originally based on the excellent post by Craig Robinson, but a significant amount of further research, investigation and extra sources of information have gone into it since.

OKD 4.5 Single Node Cluster on Windows 10 using Hyper-V

https://laptrinhx.com/okd-4-5-single-node-cluster-on-windows-10-using-hyper-v-3721419958/

Note that this is the single node cluster setup rather than the popular multi-node cluster guide: https://itnext.io/guide-installing-an-okd-4-5-cluster-508a2631cbee

Overview

This guide is really documentation of what I did to set up my small lab environment. A big part of the point is to record what I did so that if I have to repeat it, as often seems to be the case for me, I can. As such this is not a dummies guide.

This document does not describe how to use OKD, nor how to create projects or do builds. It is purely about getting a working environment.

My environment

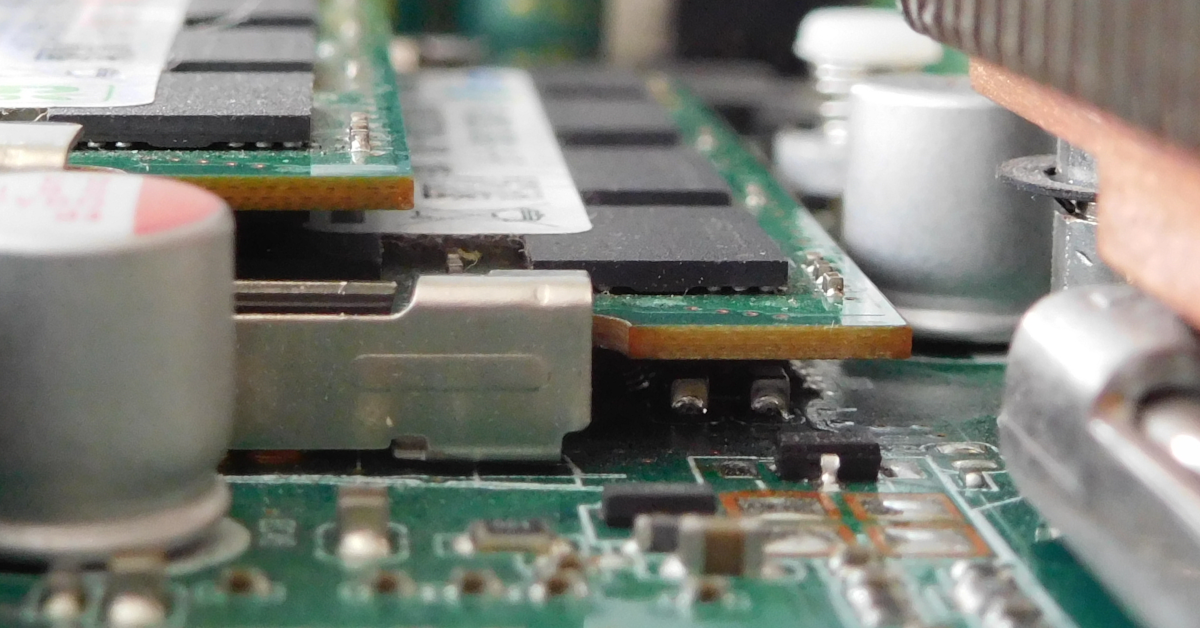

I am doing the full install on an HP Compaq Elite 8300 SFF:

- ESXi 7.0.0

- Core i5-3470 CPU @ 3.20GHz – 4 cores no hyper threading support

- 16GB memory

- Boot ESX from USB flash drive

- 500 GB Samsung SSD 860 (Also a 4TB Seagate non-SSD for backups)

I am using a Windows 10 desktop with Firefox as my primary browser.

Additionally I operate a SonicWall TZ 215 which takes the VLAN and makes it a sub-interface to the LAN.

I am looking to have a separate ESX box to run an extra worker node, partly to add extra capacity but also partly to understand how it will work with multiple worker nodes.

Installing on ESXi

Craig’s guide is for installing on Hyper-V on Windows but I needed to install on ESX because it brought multiple advantages.

I have set up my local environment using its own VLAN, 20, which I have called “OKD VLAN20”. This is configured on the ESX hosts and also on my SonicWall firewall. This VLAN uses the range 192.168.205.x and is a “VLAN Sub-Interface” to my home LAN of 192.168.202.x.

OKD hosts

All my hosts are on the VLAN “OKD VLAN20”.

| Host | HDD | vCPU | RAM | IP |
|---|---|---|---|---|
| okd4-services | 200GB | 2 | 8GB | 192.168.205.150 |
| okd4-control-plane-1 | 120GB | 4 | 16GB | 192.168.205.151 |
| okd4-bootstrap | 25GB | 4 | 16GB | 192.168.205.152 |
| okd4-compute-1 | 120GB | 4 | 16GB | 192.168.205.153 |

You will have noticed that the vCPU and RAM allocations far exceed what my ESX server physically has. ESX allows you to oversubscribe memory and vCPU, and by configuring the specified amounts OKD sees the resources it expects. The resources are also not all consumed at once, so in reality it works!
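To put a number on the oversubscription, here is a small sketch that adds up the allocations from the host table and compares them with the physical box. The figures come straight from this post; the variable names are just for illustration.

```shell
# Physical resources of the ESX host (from "My environment")
physical_cores=4
physical_ram_gb=16

# Allocations per VM, in table order: services, control-plane, bootstrap, compute
total_vcpu=$((2 + 4 + 4 + 4))
total_ram_gb=$((8 + 16 + 16 + 16))

echo "vCPU allocated: ${total_vcpu} on ${physical_cores} cores"
echo "RAM allocated:  ${total_ram_gb}GB on ${physical_ram_gb}GB"

# Ratios scaled by 10 so one decimal place survives integer arithmetic
vcpu_ratio_x10=$((total_vcpu * 10 / physical_cores))
ram_ratio_x10=$((total_ram_gb * 10 / physical_ram_gb))
echo "vCPU oversubscription: $((vcpu_ratio_x10 / 10)).$((vcpu_ratio_x10 % 10)):1"
echo "RAM oversubscription:  $((ram_ratio_x10 / 10)).$((ram_ratio_x10 % 10)):1"
```

Keep in mind that the bootstrap VM is shut down once the install is finished, so the steady-state figures are lower than this worst case.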

The services host, “okd4-services”, has a large disk, currently 200GB. This is primarily because we put our persistent volumes on this host. It makes things a lot easier in a dev environment: for example, we can just back up the VMs and have everything!

Your skills

As noted in the overview, this guide is documentation of my own lab setup rather than a dummies guide. I assume you know your way around ESX and its web console, and equally know your way around Unix.

Config files

Craig has kindly provided customized config files for a number of things and these can be found at https://github.com/cragr/okd4-snc-hyperv. I have taken these as my starting place and tweaked them for my environment.

Note: If you do edit these files ensure they have Unix EOL characters, which is just LF. Depending on how you copy the files around, your SFTP client may try to be smart and change the EOL characters. I always disable this and transfer all files as binary.
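If you are unsure whether a file picked up Windows line endings along the way, a quick check along these lines can help. This is only a sketch: the function names `has_crlf` and `to_unix_eol` are my own, and the file names in the usage comment are placeholders.

```shell
# Succeed (exit 0) if the file contains at least one CRLF line ending.
has_crlf() {
    # Build a literal carriage return portably
    cr=$(printf '\r')
    grep -q "${cr}\$" "$1"
}

# Write a copy of the file with any trailing carriage returns stripped,
# leaving the original untouched.
to_unix_eol() {
    cr=$(printf '\r')
    sed "s/${cr}\$//" "$1" > "$2"
}

# Example (placeholder file names):
# has_crlf dhcpd.conf && to_unix_eol dhcpd.conf dhcpd.conf.unix
```

Writing to a second file rather than editing in place means you can compare the two before replacing anything.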

Local DHCP and DNS

For this setup we have a host, “okd4-services”, which contains a number of infrastructure components:

- DNS

- HAProxy – Load balancer/proxy

- Apache – Web interface

- DHCP

- NFS

This means that each of the VMs in this setup is configured by DHCP, based on the MAC address of that VM.

Use Fedora 32

Craig’s guide uses Fedora 32. At the time of writing Fedora 33 was out, so I tried to use that, but it didn’t work out too well. One of the important things that changed in Fedora 33 was DNS, so rather than try to figure it out I went with Fedora 32.

OKD 4.5 rather than 4.6

I was keen to set up 4.6 but it seems to be very problematic. As mentioned above Fedora 33 has issues and it appears 4.6 has a few install challenges – note that OKD 4.6 uses Fedora CoreOS 33 bare-metal bios images. Additionally there are virtually no OKD 4.6 install guides, although there are some for OpenShift. My motivation for this setup is to get familiar with OKD so I have taken the pragmatic decision to do the clean install with 4.5.

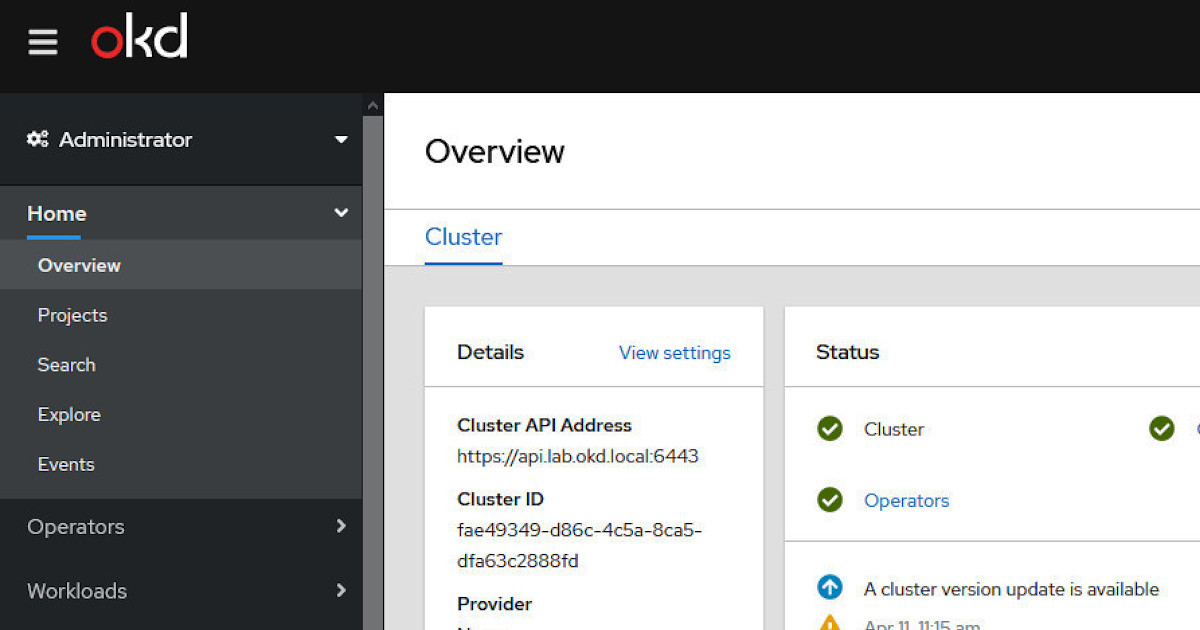

Connection Details

oc server: https://api.lab.okd.local:6443

This is for running oc commands for example:

oc login https://api.lab.okd.local:6443

OKD Web Console: https://console-openshift-console.apps.lab.okd.local/

Services host

This host must be created first as it sets up DNS and, more importantly, DHCP, which the other servers rely on.

For partitioning, note that in our case the services host will also contain the registry, which we have defined as 80GB. This is part of the reason for giving the services host a large drive (currently 200GB). Additionally, please ensure the root partition, “/”, is the last partition on the services host – this will make it straightforward to expand online if required.

Create a VM with the specs from the table at the top of this post and set the network adapter to use “OKD VLAN20”.

I am using the full “Fedora-Server-dvd-x86_64-32-1.6.iso” ISO rather than the Netinstall ISO.

Below are my configs

It’s a good idea to set the actual, final, IP and search domains as above.

Regarding partitioning, I prefer the standard partition scheme. LVM is great, but for test labs it’s too much overhead. I have given it a 200GB drive and partitioned as below. Note this is an old screenshot and shows a 30GB partition rather than the 200GB I use now:

As mentioned earlier, ensure the root partition “/” is the last partition.

If you have issues with partitioning you can see what I did in my post “CentOS 7 base VM for WordPress and MythTV”.

Once the server, okd4-services, is rebooted and working, ssh to it as root.

Update firewall

There are various places in this post where we update the firewall. I have consolidated these all into one. So run the following:

firewall-cmd --permanent --zone=public --add-service mountd

firewall-cmd --permanent --zone=public --add-service rpc-bind

firewall-cmd --permanent --zone=public --add-service nfs

firewall-cmd --permanent --add-service=dhcp

firewall-cmd --permanent --add-port=53/udp

firewall-cmd --permanent --add-port=53/tcp

firewall-cmd --permanent --add-port=6443/tcp

firewall-cmd --permanent --add-port=22623/tcp

firewall-cmd --permanent --add-service=http

firewall-cmd --permanent --add-service=https

firewall-cmd --permanent --add-port=8080/tcp

firewall-cmd --reload

Install DHCP and DNS (bind/named)

Take the config zip file mentioned earlier and put the contents of it in “/opt/okd4/configs/” on okd4-services. Then run the following to enable DHCP and use the defaults from the config file:

cd /opt/okd4/configs/

dnf -y install dhcp-server

mv /etc/dhcp/dhcpd.conf /etc/dhcp/dhcpd.conf.ootb

cp dhcpd.conf /etc/dhcp/

systemctl enable --now dhcpd

systemctl start dhcpd

Now you need to install bind and do the named config. So run the following:

dnf -y install bind bind-utils

mv /etc/named.conf /etc/named.ootb

cp named.conf /etc/named.conf

cp named.conf.local /etc/named/

mkdir /etc/named/zones

cp db* /etc/named/zones

systemctl enable named

systemctl start named

systemctl status named

First you need to confirm the connection or interface name, so run “ifconfig” which should return something like:

[root@localhost okd4_configs]# ifconfig

ens192: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.205.150 netmask 255.255.255.0 broadcast 192.168.205.255

inet6 fe80::20c:29ff:fef3:db98 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:f3:db:98 txqueuelen 1000 (Ethernet)

RX packets 77721 bytes 113390994 (108.1 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 39431 bytes 2897489 (2.7 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 36 bytes 2390 (2.3 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 36 bytes 2390 (2.3 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

From the above we can see the ethernet connection is called “ens192” (yours may be different), so now change the DNS setting on okd4-services and restart NetworkManager:

nmcli connection modify ens192 ipv4.dns "127.0.0.1"

systemctl restart NetworkManager

Now we need to test so run “dig okd.local” and you should see:

[root@okd4-services okd4_configs]# dig okd.local

; <<>> DiG 9.11.25-RedHat-9.11.25-2.fc32 <<>> okd.local

;; global options: +cmd

;; Got answer:

;; WARNING: .local is reserved for Multicast DNS

;; You are currently testing what happens when an mDNS query is leaked to DNS

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 49314

;; flags: qr aa rd ra; QUERY: 1, ANSWER: 0, AUTHORITY: 1, ADDITIONAL: 1

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 1232

; COOKIE: 0c5ef1f6a9151608324d1f975ff15bc20e748313242ced2a (good)

;; QUESTION SECTION:

;okd.local. IN A

;; AUTHORITY SECTION:

okd.local. 604800 IN SOA okd4-services.okd.local. admin.okd.local. 1 604800 86400 2419200 604800

;; Query time: 0 msec

;; SERVER: 127.0.0.1#53(127.0.0.1)

;; WHEN: Sun Jan 03 00:53:06 EST 2021

;; MSG SIZE rcvd: 122

Then run “dig -x 192.168.205.150”:

[root@okd4-services okd4_configs]# dig -x 192.168.205.150

; <<>> DiG 9.11.25-RedHat-9.11.25-2.fc32 <<>> -x 192.168.205.150

;; global options: +cmd

;; Got answer:

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 19352

;; flags: qr aa rd ra; QUERY: 1, ANSWER: 3, AUTHORITY: 1, ADDITIONAL: 2

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 1232

; COOKIE: 7103218c8a5468f004a0fe055ff15c02fab4c4838affe15b (good)

;; QUESTION SECTION:

;150.205.168.192.in-addr.arpa. IN PTR

;; ANSWER SECTION:

150.205.168.192.in-addr.arpa. 604800 IN PTR api-int.lab.okd.local.

150.205.168.192.in-addr.arpa. 604800 IN PTR api.lab.okd.local.

150.205.168.192.in-addr.arpa. 604800 IN PTR okd4-services.okd.local.

;; AUTHORITY SECTION:

205.168.192.in-addr.arpa. 604800 IN NS okd4-services.okd.local.

;; ADDITIONAL SECTION:

okd4-services.okd.local. 604800 IN A 192.168.205.150

;; Query time: 0 msec

;; SERVER: 127.0.0.1#53(127.0.0.1)

;; WHEN: Sun Jan 03 00:54:10 EST 2021

;; MSG SIZE rcvd: 196

Install HAProxy and Apache

Still logged in as root and still in “/opt/okd4/configs/” run the following to install and setup HAProxy:

dnf install haproxy -y

mv /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.ootb

cp haproxy.cfg /etc/haproxy/haproxy.cfg

setsebool -P haproxy_connect_any 1

systemctl enable haproxy

systemctl start haproxy

systemctl status haproxy

Install Apache:

dnf install -y httpd

sed -i 's/Listen 80/Listen 8080/' /etc/httpd/conf/httpd.conf

setsebool -P httpd_read_user_content 1

systemctl enable httpd

systemctl start httpd

You can now test Apache is working by either going to http://192.168.205.150:8080/ from a browser or running curl on okd4-services:

curl localhost:8080

Enable NFS

We need to have persistent storage available for things like the registry. So run the following:

systemctl enable nfs-server rpcbind

systemctl start nfs-server rpcbind

mkdir /srv/nfsshares

chmod -R 2777 /srv/nfsshares

chown -R nobody:nobody /srv/nfsshares

Back up and then edit “/etc/exports” and add the following line:

/srv/nfsshares 192.168.0.0/16(rw,sync,no_root_squash,no_all_squash,no_wdelay)

Run the following to enable the share:

setsebool -P nfs_export_all_rw 1

systemctl restart nfs-server

The NFS share should now be available. To use this in Windows, at least to test it is working, you need to add “Client for NFS” from “Turn Windows features on or off” in the Control Panel:

Once you have done the above you should be able to mount, as “T:”, our NFS share by running the following in a Windows cmd prompt:

mount \\192.168.205.150\srv\nfsshares t:

Misc

Add any misc stuff:

yum -y install jq

Time date and NTP

This is from Red Hat:

Entropy

OpenShift Container Platform uses entropy to generate random numbers for objects such as IDs or SSL traffic. These operations wait until there is enough entropy to complete the task. Without enough entropy, the kernel is not able to generate these random numbers with sufficient speed, which can lead to timeouts and the refusal of secure connections.

It would appear that OpenShift uses UTC and that any other timezone is currently unsupported, as at 10/3/2021. There is an open ticket, “Ability to change the timezone of CoreOS nodes”.

If you do want to set the correct timezone, begin by listing the available timezones using:

timedatectl list-timezones

I live in New Zealand so my timezone is “Pacific/Auckland”. I set this by running:

timedatectl set-timezone Pacific/Auckland

Obviously if you were using UTC you would run:

timedatectl set-timezone UTC

Ensure the NTP server is running. Chrony is the replacement for NTPd, so check it by running:

systemctl status chronyd.service

Ensure the port has been opened in the firewall by adding to the firewall rules:

firewall-cmd --permanent --zone=public --add-port=123/udp

Restart the firewall and list the rules by running “firewall-cmd --list-all” and you should see:

[root@okd4-services ~]# firewall-cmd --list-all

public (active)

target: default

icmp-block-inversion: no

interfaces: ens192

sources:

services: dhcp dhcpv6-client http https mdns mountd nfs rpc-bind ssh

ports: 53/udp 6443/tcp 22623/tcp 8080/tcp 53/tcp 123/udp

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

Note above the entry “123/udp”, which is the NTP port.
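Rather than eyeballing the list, you could pipe the output through a small check like the sketch below. The function name `missing_ports` is my own invention; it simply reads the “ports:” line from “firewall-cmd --list-all” and prints any port you asked for that is not there.

```shell
# Read "firewall-cmd --list-all" output on stdin and print each required
# port (given as arguments) that is NOT present on the "ports:" line.
missing_ports() {
    # Only the line starting with "ports:" matches; "forward-ports:" and
    # "source-ports:" do not because of the ^ anchor.
    open=$(sed -n 's/^ *ports: *//p')
    for want in "$@"; do
        case " $open " in
            *" $want "*) ;;          # port is open, nothing to report
            *) echo "$want" ;;       # port is missing
        esac
    done
}

# Example, using the ports opened in this post:
# firewall-cmd --list-all | missing_ports 53/udp 53/tcp 6443/tcp 22623/tcp 8080/tcp 123/udp
```

No output means every port in the list is open in the active zone.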

Backup the chronyd settings, “/etc/chrony.conf” and replace with the below:

#

# Example chrony file from zoyinc.com

#

# Using New Zealand NTP servers - Please set to your local NTP public servers

#server 43.252.70.34

server 0.pool.ntp.org

server 1.pool.ntp.org

server 2.pool.ntp.org

server 3.pool.ntp.org

server 216.239.35.0

server 216.239.35.4

# Record the rate at which the system clock gains/losses time.

driftfile /var/lib/chrony/drift

# Allow the system clock to be stepped in the first three updates

# if its offset is larger than 1 second.

makestep 1.0 3

# Enable kernel synchronization of the real-time clock (RTC).

rtcsync

# Allow NTP client access from local network.

allow 192.168.0.0/16

# Serve time even if not synchronized to a time source.

local stratum 10

# Specify directory for log files.

logdir /var/log/chrony

# Select which information is logged.

log measurements statistics tracking

You will notice the bottom two “server” entries are static IPs and are for Google NTP servers – these IPs appear to be stable.

To check the current date time settings run “timedatectl” which initially for me returned:

[root@okd4-services ~]# timedatectl

Local time: Mon 2021-02-01 16:41:37 NZDT

Universal time: Mon 2021-02-01 03:41:37 UTC

RTC time: Mon 2021-02-01 03:41:37

Time zone: Pacific/Auckland (NZDT, +1300)

System clock synchronized: no

NTP service: active

RTC in local TZ: no

Note in this case it returned “System clock synchronized: no”.

Restart chrony by running:

systemctl restart chronyd.service
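If you want to script this check rather than read the output by eye, something like the following sketch would do. The function name `clock_synchronized` is hypothetical; it only looks for the “System clock synchronized: yes” line in whatever “timedatectl” printed.

```shell
# Read timedatectl output on stdin; succeed only if it reports the
# system clock as synchronized. Leading indentation is tolerated.
clock_synchronized() {
    grep -Eq '^[[:space:]]*System clock synchronized:[[:space:]]*yes[[:space:]]*$'
}

# Example:
# timedatectl | clock_synchronized && echo "clock is in sync"
```

It may take a short while after the restart before the check passes, so a failure straight away is not necessarily a problem.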

Once it is working it should return something like:

[root@okd4-services ~]# timedatectl

Local time: Mon 2021-03-08 19:27:35 NZDT

Universal time: Mon 2021-03-08 06:27:35 UTC

RTC time: Sun 2021-04-11 06:27:15

Time zone: Pacific/Auckland (NZDT, +1300)

System clock synchronized: yes

NTP service: active

RTC in local TZ: no

You can also run “chronyc sources” which returns the following when NTP is working:

[root@okd4-services ~]# chronyc sources

210 Number of sources = 5

MS Name/IP address Stratum Poll Reach LastRx Last sample

^- time1.google.com 1 6 377 28 -2384us[-1600us] +/- 82ms

^- 101-100-146-146.myrepubl> 2 6 37 27 -1500us[ -715us] +/- 42ms

^- joplin.convolute.net.nz 2 6 37 28 +13ms[ +14ms] +/- 57ms

^* ntp1.ntp.net.nz 1 6 37 28 +509us[+1293us] +/- 3074us

^- ns1.tdc.akl.telesmart.co> 2 6 37 28 +296us[ +296us] +/- 6512us

You can also tail the log:

[root@okd4-services ~]# tail -f /var/log/chrony/tracking.log

2021-03-08 06:23:34 202.46.177.18 2 -11.069 0.250 -9.233e-05 N 1 3.640e-04 -3.327e-07 7.036e-03 7.558e-04 6.876e-03

2021-03-08 06:24:39 202.46.177.18 2 -11.070 0.291 7.842e-04 N 1 5.256e-04 7.645e-05 5.351e-03 9.281e-04 5.011e-03

2021-03-08 06:25:43 202.46.177.18 2 -11.072 0.356 -1.868e-05 N 2 4.066e-04 -7.539e-05 6.117e-03 8.378e-04 4.582e-03

2021-03-08 06:26:48 202.46.177.18 2 -11.072 0.410 7.990e-05 N 2 5.149e-04 -5.144e-05 6.422e-03 7.999e-04 4.090e-03

Possibly backup services node

At this point I have a habit of backing up the services node because this point is prior to installing OpenShift and so if I need to redo the whole OKD part then this is a nice, clean, point to do it.

OpenShift client and server

You need to download oc client and openshift-install files. These can be found at: https://github.com/openshift/okd/releases

You will need to scroll down the page quite a bit to find the “openshift-client-linux” and “openshift-install-linux” archives:

Put these two files on okd4-services in /opt/okd4/misc/ and then extract them, move them to /usr/local/bin and finally check versions by running:

mkdir /opt/okd4/misc

cd /opt/okd4/misc/

tar -zxvf openshift-client-linux-4.5.0-0.okd-2020-10-15-235428.tar.gz

tar -zxvf openshift-install-linux-4.5.0-0.okd-2020-10-15-235428.tar.gz

mv kubectl oc openshift-install /usr/local/bin/

cd /

oc version

openshift-install version

Get Red Hat pull secret

First time I read about this I thought it was kind of optional because Craig’s guide said:

In the install-config.yaml, you can either use a pull-secret from RedHat or the default of '{"auths":{"fake":{"auth": "bar"}}}' as the pull-secret.

However, I discovered the default doesn’t work now, because when the control-plane node comes up it will use the pull secret to login to the Red Hat OpenShift Cluster Manager site to download sample images. It further seems that the “fake” login doesn’t work now.

It seems that by default OKD will download the sample images to speed up things and if it can access the images repo but not login then the control-plane will continually try to download and will never be up. At least that is my experience.

You will need to create a Red Hat account to login, which is free, then go to https://cloud.redhat.com/openshift/install/pull-secret to get your pull secret – this is a json string.

Openshift installer setup

Firstly you need an ssh key, so run “ssh-keygen” from any folder and accept the default filename of “/root/.ssh/id_rsa”. For myself I have a blank passphrase.

The content of “/root/.ssh/id_rsa.pub” should look something like:

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQD3U0ysRouHNtCMtzx4qeqny8kS15Mo+2KZjahlknWs8+f7Rmwmrols6dLVujjOnr0dF/FgfDfOhpSa60ZudpLxxz56xT1UYBmU4f62nD6GqKdPhK9uy0OK9RmIWcNM/ZpMGVU6SEV9Darj+e0IOUYXJIAimMSCrKfXJquLO1bMcdLkEaI9ESZrN9SbrKxBdMqLBCzRV1WWW8dKSeTkXm2PBrHQu3Y31FArTVWcDXPNDEiRjkKCIGAYXpmkx6bnKWCRL0s3EjB0q8TI65S81rZgEwxhkk+bxL5aOrrIK69BSJ32rTbU4DrTOS8n4XboP4Pqw6TRemaposn86FhkSQU8PAa3TnoSqzVb7Qo+/5+oDXKppgLIJLLlDkYBJmXY+PZZWIpl6lh4qYBZ7J0JISdg3OYuSt+9U9Uy4EdgLddLqnhyqqwGmtMhpmkfho+QOx+nKeH/XV9m5bknmlCEYPqJtbQpQDklLbB+AeNGwhai27HFvPfz387SEcMe/FVw5w0= root@okd4-services

Now edit “/opt/okd4/configs/install-config.yaml”. You will see at the end of this file a line:

sshKey: 'ssh-ed25519 AAAA…'

Replace ‘ssh-ed25519 AAAA…’ with the contents of “/root/.ssh/id_rsa.pub”, thus putting in your own ssh key.

Also look for:

pullSecret: '{"auths":{"fake":{"auth": "bar"}}}'

Replace '{"auths":{"fake":{"auth": "bar"}}}' with the pull secret you got from Red Hat earlier.

Next create a folder “/opt/okd4/install_dir/” and ensure it is empty – so if you are repeating this step ensure you remove all files, especially hidden files.

Run the following to generate the Kubernetes manifests and ignition-configs, you may get some warnings which can be ignored:

cd /opt/okd4/install_dir/

cp /opt/okd4/configs/install-config.yaml /opt/okd4/install_dir/

openshift-install create manifests --dir=/opt/okd4/install_dir/

openshift-install create ignition-configs --dir=/opt/okd4/install_dir/

Copy the install folder to Apache:

mkdir /var/www/html/okd4

cp -R /opt/okd4/install_dir/* /var/www/html/okd4/

chown -R apache: /var/www/html/

chmod -R 755 /var/www/html/

Note the copying of files to Apache is recursive. This is because, for some reason, if you don’t include the sub-folder “auth” then the install of the control nodes, etc. will hang.
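Since the nodes will later fetch their ignition files over HTTP, it can be worth confirming from the command line that Apache is really serving them. The sketch below is one way to do that; `check_served_files` is a made-up helper name, and the file list is taken from the files referred to in this post.

```shell
# Request each file under a base URL and report which ones are reachable.
# Usage: check_served_files <base_url> <file> [file...]
# Exits non-zero if any file could not be fetched.
check_served_files() {
    base=$1
    shift
    missing=0
    for f in "$@"; do
        # -f: fail on HTTP errors, -sS: quiet but still show errors,
        # -o /dev/null: discard the body, we only care about success
        if curl -fsS -o /dev/null "$base/$f"; then
            echo "ok       $f"
        else
            echo "MISSING  $f"
            missing=$((missing + 1))
        fi
    done
    [ "$missing" -eq 0 ]
}

# Example:
# check_served_files http://192.168.205.150:8080/okd4 metadata.json bootstrap.ign master.ign worker.ign
```

A “MISSING” line usually points at a copy or permissions problem under /var/www/html/okd4.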

Check it is all available by going to Apache in a browser at http://192.168.205.150:8080/okd4/metadata.json

This should look like:

Now get the bare-metal bios image and sig files. Go to https://getfedora.org/en/coreos/download?tab=metal_virtualized&stream=stable

From here look for the Raw file download under “Bare Metal & Virtualized”:

Download the raw file, in my case “fedora-coreos-32.20200629.3.0-metal.x86_64.raw.xz” and put that on okd4-services in the folder “/opt/okd4/misc/”.

Next we need to get the signature file. For this click on the link on the same page “Verify signature & SHA256”, as in the earlier screenshot. This will bring up a dialog where you should click on “signature” to download the file in my case “fedora-coreos-32.20200629.3.0-metal.x86_64.raw.xz.sig” – again save on okd4-services in the folder “/opt/okd4/misc/”.

Now run the following to move it to Apache:

cd /opt/okd4/misc

cp fedora-coreos* /var/www/html/okd4/

chown -R apache: /var/www/html/

chmod -R 755 /var/www/html/

OKD nodes

You need to download the Fedora CoreOS bare metal ISO. This is available on the same page that you got the raw file. As before go to https://getfedora.org/en/coreos/download?tab=metal_virtualized&stream=stable, but this time download the ISO as below.

Note that currently I am using Fedora 32 CoreOS. This is for multiple reasons which include a change to the default host name which is now ‘fedora’ rather than ‘localhost’, this breaks DHCP assigning a hostname which further breaks other things. The Fedora 32 ISO is not visible on the site. To get the F32 version get the URL to download the latest version and change the url to use “32.20200629.3.0” – this will mean changing the url in two places. So in my case the file is “fedora-coreos-32.20200629.3.0-live.x86_64.iso” which is https://builds.coreos.fedoraproject.org/prod/streams/stable/builds/32.20200629.3.0/x86_64/fedora-coreos-32.20200629.3.0-live.x86_64.iso

Create VMs for okd4-control-plane-1, okd4-bootstrap and okd4-compute-1 using the ISO you just downloaded and sizing from the table at the top of this post. Ensure you set the network adapter to “OKD VLAN20”

Start up the new VMs so we can get the MAC addresses. My details are below – obviously you will get different MAC addresses:

| Host | MAC address |
|---|---|
| okd4-bootstrap | 00:0c:29:95:bf:4b |
| okd4-control-plane-1 | 00:0c:29:d9:0a:dc |
| okd4-compute-1 | 00:0c:29:dd:a3:93 |

Now you need to update the DHCP settings on okd4-services. Backup and edit the file “/etc/dhcp/dhcpd.conf”.

You will see at the bottom a section that looks like:

# Static DNS Entry for master node

host okd4-control-plane-1 {

hardware ethernet 00:15:5D:3C:E3:06;

fixed-address 192.168.205.151;

}

# Static DNS Entry for bootstrap nodes

host okd4-bootstrap {

hardware ethernet 00:15:5D:3C:E3:05;

fixed-address 192.168.205.152;

}

You need to update each “hardware ethernet” value to the MAC address of the corresponding VM.
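If you would rather generate the host entries than hand-edit them, a sketch like the one below prints stanzas in the same shape as the config above. The helper name `dhcp_host_entry` is hypothetical, and the MAC addresses are the ones from my own VMs, so substitute yours.

```shell
# Print a dhcpd.conf host stanza for one VM.
# Usage: dhcp_host_entry <hostname> <mac> <ip>
dhcp_host_entry() {
    name=$1
    mac=$2
    ip=$3

    # Reject anything that is not six colon-separated hex pairs
    if ! printf '%s\n' "$mac" | grep -Eq '^([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}$'; then
        echo "invalid MAC address: $mac" >&2
        return 1
    fi

    printf 'host %s {\n' "$name"
    printf '    hardware ethernet %s;\n' "$mac"
    printf '    fixed-address %s;\n' "$ip"
    printf '}\n'
}

# MAC addresses below are from my VMs; yours will differ
dhcp_host_entry okd4-bootstrap       00:0c:29:95:bf:4b 192.168.205.152
dhcp_host_entry okd4-control-plane-1 00:0c:29:d9:0a:dc 192.168.205.151
dhcp_host_entry okd4-compute-1       00:0c:29:dd:a3:93 192.168.205.153
```

The output is only printed to the terminal, so you still paste it into “/etc/dhcp/dhcpd.conf” yourself after checking it.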

Then you need to restart DHCP by running:

systemctl restart dhcpd

Next restart both okd4-control-plane-1 and okd4-bootstrap and from each VM run “ifconfig” and verify they have got the correct IPs.

okd4-bootstrap

For okd4-bootstrap you need to run the following from the VM console. You can’t ssh to the host, nor can you paste text into the VMRC:

curl -O http://192.168.205.150:8080/okd4/bootstrap.ign

sudo coreos-installer install /dev/sda --ignition-file ./bootstrap.ign

This should produce:

Now power off the VM and eject the ISO and restart.

If it restarts properly you should see:

The bootstrap node will restart once a couple of minutes after you power it up – this is expected.

While it is starting you may see errors for a few minutes but these will eventually settle down.

One error you might see, even once it is up, is:

[ 1738.628935] SELinux: mount invalid. Same superblock, different security settings for (dev mqueue, type mqueue)

[ 1763.433276] SELinux: mount invalid. Same superblock, different security settings for (dev mqueue, type mqueue)

This relates to a known bug which seems unlikely to be fixed (https://bugzilla.redhat.com/show_bug.cgi?id=1868057). This error will continue even once it is up.

If you watch the VMware monitoring for okd4-bootstrap during its initial startup you will see the activity spike over a few minutes and then settle down:

While you are doing okd4-bootstrap, but before you start on okd4-control-plane-1, you can run the following from okd4-services to show logging and the progress of the bootstrap:

export KUBECONFIG=/opt/okd4/install_dir/auth/kubeconfig

openshift-install --dir=/opt/okd4/install_dir/ wait-for bootstrap-complete --log-level=debug

If it works you should see:

[root@okd4-services ~]# openshift-install --dir=/opt/okd4/install_dir/ wait-for bootstrap-complete --log-level=debug

DEBUG OpenShift Installer 4.5.0-0.okd-2020-10-15-235428

DEBUG Built from commit 63200c80c431b8dbaa06c0cc13282d819bd7e5f8

INFO Waiting up to 20m0s for the Kubernetes API at https://api.lab.okd.local:6443…

INFO API v1.18.3 up

INFO Waiting up to 40m0s for bootstrapping to complete…

The important thing to wait for is “INFO API v1.18.3 up”. You can now press Ctrl+C to get out of this, because bootstrapping won’t complete until the control plane is up. At least we know the bootstrap is ready.

If you wait for the cpu activity to die down then this should return “INFO API v1.18.3 up” straight away.

Until you get the correct response don’t move to the next step because this means the bootstrap is not ready.

okd4-control-plane-1

The steps for the control plane are almost the same except use “master.ign”. So from the console of okd4-control-plane-1 run the following:

curl -O http://192.168.205.150:8080/okd4/master.ign

sudo coreos-installer install /dev/sda --ignition-file ./master.ign

If this goes well you should see:

As before power off the VM and remove the ISO and restart.

Unlike the startup for the bootstrap there may be a few errors but on the whole, if you have waited for the bootstrap to fully start, it should be pretty clean of errors and will look like:

As before the control-plane node will restart a few minutes after you power on the node.

Now run the same test you did for the bootstrap:

openshift-install --dir=/opt/okd4/install_dir/ wait-for bootstrap-complete --log-level=debug

If everything is working you should get the following back:

[root@okd4-services misc]# openshift-install --dir=/opt/okd4/install_dir/ wait-for bootstrap-complete --log-level=debug

DEBUG OpenShift Installer 4.5.0-0.okd-2020-10-15-235428

DEBUG Built from commit 63200c80c431b8dbaa06c0cc13282d819bd7e5f8

INFO Waiting up to 20m0s for the Kubernetes API at https://api.lab.okd.local:6443…

INFO API v1.18.3 up

INFO Waiting up to 40m0s for bootstrapping to complete…

DEBUG Bootstrap status: complete

INFO It is now safe to remove the bootstrap resources

DEBUG Time elapsed per stage:

DEBUG Bootstrap Complete: 4m46s

INFO Time elapsed: 4m46s

Next we need to check the status of the cluster, so run the following on the services node:

export KUBECONFIG=/opt/okd4/install_dir/auth/kubeconfig

oc whoami

oc get nodes

You should get back the following, but if you don’t, give it a few minutes:

Now get the cluster operator details:

oc get clusteroperators

This may take a while to return details but it should return:

You will notice that in the beginning a lot of the operators have an “AVAILABLE” status of “False”. In particular you will notice the “AVAILABLE” status of “console” is “UNKNOWN”. This means the web console is not available. Because we also have a compute node some of the operators won’t come up yet, so move to setting up the compute node.

okd4-compute-1

Start up okd4-compute-1 and as with the other nodes run the following in the console. Note the ignition file is worker.ign:

curl -O http://192.168.205.150:8080/okd4/worker.ign

sudo coreos-installer install /dev/sda --ignition-file ./worker.ign

Again power off and then power on the VM.

The worker node won’t be added until its certificate signing requests are approved. Run the following:

oc get csr -o name

This will initially return a couple of requests:

Also if you run “oc get nodes” you will see that the compute node is not listed:

Give it a bit more time until the compute node seems to be up and the CPU usage has dropped down and is flat and then run the command again and you should most likely get back 3 requests:

Now run the following to approve all requests, you might need to run it a couple of times:

oc get csr -ojson | jq -r '.items[] | select(.status == {} ) | .metadata.name' | xargs oc adm certificate approve

Now check your nodes by running “oc get nodes”. This should now return:

If your node still hasn’t shown up, keep checking the CSRs and repeating the steps to approve them.
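The check-and-approve cycle can get tedious by hand, so here is a rough sketch of looping it. This is not the jq approach used above: it reads the plain “oc get csr” table and treats any row whose last column is “Pending” as unapproved. Both function names are my own, and the exact node name that “oc get nodes” reports is an assumption you should check against your own output.

```shell
# Approve every CSR whose CONDITION column (the last one) reads "Pending".
approve_pending_csrs() {
    for name in $(oc get csr --no-headers | awk '$NF == "Pending" { print $1 }'); do
        oc adm certificate approve "$name"
    done
}

# Keep approving CSRs until the named node appears in "oc get nodes".
# Usage: approve_until_node_listed <node> <max_attempts> <delay_seconds>
approve_until_node_listed() {
    node=$1
    max=$2
    delay=$3
    attempt=1
    while [ "$attempt" -le "$max" ]; do
        approve_pending_csrs
        # Match the short host name, with or without a domain suffix
        if oc get nodes --no-headers | awk '{ print $1 }' | grep -Eq "^${node}(\.|\$)"; then
            echo "$node is listed"
            return 0
        fi
        sleep "$delay"
        attempt=$((attempt + 1))
    done
    echo "$node still not listed after $max attempts" >&2
    return 1
}

# Example (not run here): try every 30 seconds for up to 10 minutes
# approve_until_node_listed okd4-compute-1 20 30
```

Blindly approving every pending request is fine for a throwaway lab like this one, but it is not something to copy into an environment you care about.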

Take the bootstrap node out of the proxy by running the below on the services node:

sudo sed -i '/ okd4-bootstrap /s/^/#/' /etc/haproxy/haproxy.cfg

systemctl reload haproxy

Shut down okd4-bootstrap as it is no longer needed. The best way to do this is to ssh to okd4-bootstrap by running “ssh core@192.168.205.152” as root from the services node. Then issue:

sudo shutdown -h now

Rerun “oc get clusteroperators” and you should find all the operators are up. This is because some of the operators run on the worker node.

You should also remove the OKD files in Apache:

rm /var/www/html/okd4/auth/kubeadmin-password

rm /var/www/html/okd4/auth/kubeconfig

Login to OKD web console

You should now be able to login to the OKD web console. But first you need to get the OKD DNS server integrated into the DNS on your Windows desktop.

Enabling OKD DNS on Windows desktop

To use OKD from a Windows desktop it is almost essential that the OKD DNS be used alongside the public DNS servers.

The OKD DNS server has been set up to forward to public DNS servers including Google’s 8.8.8.8 DNS server. Thus if you only used the OKD DNS server then DNS would work. The problem comes if the OKD DNS goes down for some reason, as may well happen because OKD is a development instance.

The answer is to define an order of DNS servers with the OKD DNS at the top.

Steps

From “Network and Sharing Centre” in the Control Panel select “Change adapter settings”:

From the resulting dialog right click on the appropriate adaptor and select “Properties”. From this properties dialog double click on “Internet Protocol Version 4 (TCP/IPv4)”. This brings up the “Internet Protocol Version 4 (TCP/IPv4) Properties”, from this click on “Advanced”:

From the dialog, “Advanced TCP/IP Settings” select the “DNS” tab and add the OKD DNS server at the top of the list, in my case 192.168.205.150:

How it works

Once this is enabled DNS calls will go to the OKD DNS and if no response is received then it will go to Google.

If the OKD DNS for some reason stops responding then for the next 10 minutes (based on my testing) Windows will use the Google DNS server. After that 10 minutes it will retry the OKD DNS.

This means under this configuration that if you stop the OKD DNS server then you will need to wait 10 minutes, after the time you restarted the OKD DNS server, before Windows can resolve OKD names/urls – be patient!

If you are having problems connecting to the OKD DNS server then please have a look at the post Test DNS connectivity

Login

Login to OKD https://console-openshift-console.apps.lab.okd.local

You should login as “kubeadmin”

The password is the contents of the file “/opt/okd4/install_dir/auth/kubeadmin-password” on the services node.

Finishing off tasks

Configuring registry storage

Be careful and maybe backup

I say this because I had quite a bit of trouble getting this working, including trying to do it via the web console. To get to this point you have done a lot of configs and changes, so you might want to consider shutting everything down and backing up the full set 🙂

This section is based on both Craig’s docs and the OKD 4.5 documentation:

Configuring the registry for vSphere

https://docs.openshift.com/container-platform/4.5/registry/configuring_registry_storage/configuring-registry-storage-vsphere.html

Don’t have any NFS shares mapped to Windows. When I was having lots of trouble with this, one of the things I had done was mount the NFS share on my Windows 10 desktop. I removed this and rebooted my desktop prior to the last successful setup of the image registry storage. I don’t know if having it mounted was a problem, but there is a very small chance it was part of the problem, maybe….

First check you don’t have a registry pod by running:

oc get pod -n openshift-image-registry

Note above there is not an entry “image-registry”

We need to create persistent storage for the registry, using the NFS share we setup earlier, and then create the registry. So run the following to create a folder for the registry:

mkdir -p /srv/nfsshares/registry

chown -R nobody:nobody /srv/nfsshares/registry

chmod -R 2777 /srv/nfsshares/registry

Now create a persistent volume:

export KUBECONFIG=/opt/okd4/install_dir/auth/kubeconfig

cd /opt/okd4/configs/

oc create -f registry_pv.yaml

Now run:

oc patch configs.imageregistry.operator.openshift.io cluster --type merge --patch '{"spec":{"managementState":"Managed"}}'

Now we need to edit the registry configuration so run the following to open up the config in vi:

oc edit configs.imageregistry.operator.openshift.io

Look for a section:

Edit this so the “{}” are removed and it looks like:
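As an alternative to editing in vi, the same change can probably be made with a merge patch. This is a sketch under assumptions: it assumes the section being edited is “storage” under “spec”, and that the intended result is a PVC entry with an empty claim, which is what the OpenShift registry documentation describes for letting the operator create the “image-registry-storage” claim itself. The function name is made up for illustration.

```shell
# Hypothetical helper: point the registry at PVC-backed storage with an empty
# claim name, so the operator creates and uses its default claim.
set_registry_pvc_storage() {
  oc patch configs.imageregistry.operator.openshift.io cluster --type merge \
    --patch '{"spec":{"storage":{"pvc":{"claim":""}}}}'
}
```

Run “set_registry_pvc_storage” once, from a shell where KUBECONFIG is exported as above.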

Now if you check again for an image registry pod, the output has an extra line:

Importantly, when you look at the persistent volume claims you will see that the “image-registry-storage” persistent volume claim is bound to our persistent volume “registry-pv”, which we created from “registry_pv.yaml”.

Enable the Image Registry default route

By default the registry that comes with this install is not exposed so to enable the default route run the following patch:

oc patch configs.imageregistry.operator.openshift.io/cluster --type merge -p '{"spec":{"defaultRoute":true}}'

Make control plane schedulable

We want to make it so the control plane functions as master and worker. By default if you have only a master it will be “master,worker”. However if you define workers then the control plane will only be “master”.

This is how to make it schedulable and consequently run containers/pods:

Login to the web console as an admin and go to “Administration | Cluster Settings | Global Configuration”:

Then select “Scheduler”:

Select “YAML” and find the line that says “mastersSchedulable: false”, as below. Change the “false” to “true” and save.

Looking in “Compute | Nodes” you will see that the control node, okd4-control-plane-1, now has a role “master,worker” – previously it was just “master”.
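If you would rather not use the web console, the same setting can be changed from the services node with a merge patch against the cluster Scheduler resource. This is a sketch, and the function name is my own invention. The resource and field names are the ones shown on the YAML tab.

```shell
# Hypothetical helper: set mastersSchedulable to true on the cluster Scheduler config
make_masters_schedulable() {
  oc patch schedulers.config.openshift.io cluster --type merge \
    --patch '{"spec":{"mastersSchedulable":true}}'
}
```

After calling “make_masters_schedulable”, “oc get nodes” should list the control plane node with the roles “master,worker”.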

Adding HTpasswd authentication

OKD does not appear to contain a database of usernames and passwords. Rather, you get an out of the box user, “kubeadmin”, as a temporary admin.

Beyond kubeadmin you need to specify an identity provider, IDP, which OKD can authenticate against. The mechanisms available include “HTPasswd”, “LDAP”, “GitHub” and “Google” amongst others. Because this is a home lab setup let’s go for the simplest to set up, “htpasswd”.

Long story short, you create a standard Apache htpasswd file and upload it to OKD. I have included an htpasswd file with the configs called “zoyinc_okd4_lab_users.htpasswd”.

I created this htpasswd file on the services host as we have already installed Apache on it. I ran the following:

cd /opt/okd4/configs/

htpasswd -c -B -b zoyinc_okd4_lab_users.htpasswd admin cool4School

htpasswd -B -b zoyinc_okd4_lab_users.htpasswd zoyinc 2beG8always

htpasswd -B -b zoyinc_okd4_lab_users.htpasswd test1 37TestPlans

htpasswd -B -b zoyinc_okd4_lab_users.htpasswd test2 37TestPlans

As you can see this created 4 test users:

| User | Password |
| --- | --- |
| admin | cool4School |
| zoyinc | 2beG8always |
| test1 | 37TestPlans |
| test2 | 37TestPlans |

The htpasswd file only authenticates the users. It does nothing in regards to those users’ rights or roles.
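An htpasswd file is plain text with one “user:hash” entry per line, so it is easy to check which users it contains before uploading it. A minimal sketch, with a function name made up for illustration:

```shell
# Hypothetical helper: print the user names (the part before the first colon)
# from an htpasswd file, one per line
list_htpasswd_users() {
  cut -d: -f1 "$1"
}
```

For example, “list_htpasswd_users /opt/okd4/configs/zoyinc_okd4_lab_users.htpasswd” should print the four users created above.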

Login to the web console and as you did earlier go to “Administration | Cluster Settings | Global Configuration” but this time open up “OAuths”.

From here, at the bottom from “Identity Providers” click on the “Add” dropdown:

Select your htpasswd file or the one I provided and click on “Add”:

Open up a new browser in a private window, or Incognito with Chrome, and from the login screen ensure you select “htpasswd”:

Login with one of the test users in your htpasswd file – for me I logged in as “admin”

At this point you will be able to login but you won’t see anything:

You will also see the user now shows up under “User Management”:

From “User Management | Groups” click on “Create Group” and create one that looks like:

apiVersion: user.openshift.io/v1

kind: Group

metadata:

  name: okd 4 lab administrators

users:

  - admin

Note all names should be in lower case. This is because while caps are allowed in other places it will do a “.lower()”, so it is just easier to keep everything lower case.

The “name” is completely arbitrary and under “users” is our user “admin”. Click on “Create” to create the group.

Now we need to bind it to a role, so select “Role Bindings”.

Note the role, for the admin user, should probably be “cluster-admin”

Also create a binding called admin-rb because just assigning “cluster admin” seems to miss out some pieces of functionality
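The group and its cluster role binding can also be created from the command line with “oc adm groups new” and “oc adm policy add-cluster-role-to-group”. The sketch below is an alternative to the web console steps and not what I actually ran. The function name is made up, and it only covers the “cluster-admin” binding, not the extra admin-rb binding.

```shell
# Hypothetical helper: create a group with the given users, then grant the
# group the cluster-admin cluster role.
# Usage: create_admin_group GROUP USER [USER ...]
create_admin_group() {
  admin_group=$1
  shift
  oc adm groups new "$admin_group" "$@"
  oc adm policy add-cluster-role-to-group cluster-admin "$admin_group"
}
```

For example, “create_admin_group okd4-lab-admins admin”, where “okd4-lab-admins” is just an example name without spaces.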

Now create a new group for basic developer rights, following the same steps you did for admins. The YAML should look like:

apiVersion: user.openshift.io/v1

kind: Group

metadata:

  name: okd 4 lab developers

users:

  - zoyinc

  - test1

  - test2

Now create a role binding in a similar way to what you did for admin:

Not upgradable

I was very keen to get 4.6 working and as I mentioned this didn’t work very well as a clean install. I was then thinking to install 4.5 and upgrade to 4.6. I was heartened to see:

The long story short is the single node installs are not upgradeable. I believe with a standard setup it upgrades one master and then another and this is not possible with a single node setup.

When you go through the process to upgrade you eventually get the bad news:

Post install options

Some possible post deployment things you could do:

Add catalog sources to OperatorHub

This describes enabling all of the Red Hat OperatorHub sources. It also describes adding a new source, OperatorHub.io.

Troubleshooting etc.

SSH to nodes

It is really helpful to be able to login to the services, bootstrap and control plane nodes for diagnostic purposes.

For the services node, since it is a regular Fedora server you can just ssh as normal. For the other nodes that are created via OKD it is slightly trickier.

When you modified “install-config.yaml” to include an ssh public key this was replicated to the bootstrap and control plane nodes. The default user for these nodes is “core” and when you deploy the cluster, the key is added to the core user’s ~/.ssh/authorized_keys list.

The result is that for my setup, because I did everything as root, you can login to the services host as root and then ssh to the bootstrap using:

ssh core@192.168.205.152

Shutting down nodes

In most cases the easiest way to shut down nodes is to ssh to the node as described above and then run:

sudo shutdown -h now

Also see Red Hat: How To: Stop and start a production OpenShift Cluster

Get bootstrap logs

From the services VM you can run the following to download the logs from both the bootstrap and control-plane nodes:

openshift-install gather bootstrap --bootstrap 192.168.205.152 --master 192.168.205.151

You can see in the above that you need to specify the bootstrap and control-plane/master nodes. For more details on options run:

openshift-install gather bootstrap --help

When it completes you should get:

[root@okd4-services misc]# openshift-install gather bootstrap --bootstrap 192.168.205.152 --master 192.168.205.151

INFO Pulling debug logs from the bootstrap machine

INFO Bootstrap gather logs captured here "/opt/okd4/misc/log-bundle-20210106145707.tar.gz"

The resultant gzipped tar file is on the services VM. When you extract it, it will look like:

If you think that is an overwhelming list of log files, then I completely agree with you. I really struggled to find where to look. As an example, here is what I did when I was trying to diagnose the below error:

openshift-samples import error:

Cluster operator openshift-samples Degraded is True with FailedImageImports: Samples installed at 4.5.0-0.okd-2020-10-15-235428, with image import failures for these imagestreams: nodejs jboss-eap64-openshift redhat-sso70-openshift jboss-fuse70-console mysql redhat-sso71-openshift dotnet-runtime jboss-amq-63 fuse-apicurito-generator dotnet jboss-webserver31-tomcat7-openshift jboss-datagrid65-openshift jboss-fuse70-karaf-openshift openjdk-8-rhel8 jboss-datagrid73-openshift apicurito-ui sso74-openshift-rhel8 jboss-decisionserver64-openshift ruby jboss-fuse70-java-openshift openjdk-11-rhel8 fuse7-java-openshift jboss-webserver30-tomcat7-openshift rhdm-kieserver-rhel8 redis java eap-cd-runtime-openshift openjdk-11-rhel7 rhpam-businesscentral-rhel8 mariadb redhat-sso72-openshift fis-java-openshift fuse7-eap-openshift golang jboss-fuse70-eap-openshift apicast-gateway fuse7-console rhdm-decisioncentral-rhel8 jboss-datagrid72-openshift jboss-datagrid71-openshift fuse7-karaf-openshift httpd python mongodb rhpam-kieserver-rhel8 jboss-webserver31-tomcat8-openshift jboss-datagrid65-client-openshift rhpam-businesscentral-monitoring-rhel8 jboss-eap71-openshift jboss-eap70-openshift perl postgresql nginx fis-karaf-openshift redhat-sso73-openshift jboss-eap72-openshift redhat-openjdk18-openshift jboss-datavirt64-driver-openshift jboss-webserver30-tomcat8-openshift rhpam-smartrouter-rhel8 eap-cd-openshift jboss-processserver64-openshift jboss-webserver50-tomcat9-openshift jboss-datagrid71-client-openshift jboss-amq-62 jboss-datavirt64-openshift php ; last import attempt 2021-01-06 01:48:04 +0000 UTC

I took one of the images, “jboss-fuse70-console”, and grepped the entire set of bootstrap log files for it. This brought back a number of hits and from that I could see what the problem was.
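Searching the whole bundle for a single string can be done with a recursive grep. The sketch below assumes the bundle has already been extracted, for example with “tar -xzf log-bundle-20210106145707.tar.gz”. The function name is made up for illustration.

```shell
# Hypothetical helper: list every file under a directory that mentions a string.
# $1 = extracted log bundle directory, $2 = literal text to search for
find_in_bundle() {
  # -r recurse, -l print file names only, -F treat the text as a fixed string
  grep -rlF -- "$2" "$1" | sort
}
```

Running it with the extracted directory and “jboss-fuse70-console” gives a short list of files to open, rather than having to browse the whole bundle.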

Date time problems – NTP and DNS

You can find yourself in a weird catch 22 space if your date/time is well out.

It seems the DNS forwarders I use, aka Google 8.8.8.8, don’t respond if your date is significantly out.

Additionally in your NTP settings, in “/etc/chrony.conf”, you would typically specify the NTP servers using DNS names similarly to:

server 0.pool.ntp.org

server 1.pool.ntp.org

server 2.pool.ntp.org

server 3.pool.ntp.org

Thus you have a vicious circle because to get the correct time from an NTP server you need to resolve the DNS names but that doesn’t work because the time is significantly out.

To fix this you could set the date manually or include a static IP in the list of “server” in the chrony.conf file.

Useful URLs

Troubleshooting Bootstrap Failures

https://github.com/openshift/installer/blob/master/docs/user/troubleshootingbootstrap.md

Unfortunately, there will always be some cases where OpenShift fails to install properly. In these events, it is helpful to understand the likely failure modes as well as how to troubleshoot the failure.

OKD 4.5 Single Node Cluster on Windows 10 using Hyper-V

https://laptrinhx.com/okd-4-5-single-node-cluster-on-windows-10-using-hyper-v-3721419958/

Craig Robinson’s much read, and much linked to, guide to installing a 4.5 cluster. This post is based firstly on this version, for Windows, which I massaged to use for ESX before I discovered he had one for ESX also. Great work and thanks Craig.

Guide: OKD 4.5 Single Node Cluster (ESXi)

https://medium.com/swlh/guide-okd-4-5-single-node-cluster-832693cb752b

From Craig Robinson:

After listening to some feedback in the chat on a recent Twitch stream and the okd-wg mailing list I decided to create a guide for installing an OKD 4.5 SNC (single node cluster). This guide will use no worker nodes and only a single control-plane node with the goal of reducing the total amount of resources needed to get OKD up and running for a test drive.

Deploying Openshift/OKD 4.5 on Proxmox VE Homelab (Ross Brigoli)

https://blog.rossbrigoli.com/2020/11/running-openshift-at-home-part-44.html

A really good set of instructions, very similar in nature to Craig’s, but this guy has included pages about his impressive hardware setup. I suspect the guides are similar because the process is somewhat standard, maybe.

Frequently Asked Questions (OKD)

https://github.com/openshift/okd/blob/master/FAQ.md#can-i-run-a-single-node-cluster

OKD frequently asked questions

Sample install-config.yaml file for bare metal

https://docs.okd.io/latest/installing/installing_bare_metal/installing-bare-metal.html#installation-bare-metal-config-yaml_installing-bare-metal

This describes the install-config.yaml file and describes what each property is for.

OKD 4 Single Node Cluster

https://cgruver.github.io/okd4-single-node-cluster/

This is a guide about “Building an OKD4 single node cluster with minimal resources”. It is by Charro Gruver, aka “cgruver”. It is completely different to Craig Robinson’s guide, though they are tackling the same task so there is lots of cross-over. It has automation shell scripts that allow for a custom setup.

OKD 4 Web Console: Accessing the web console

https://docs.okd.io/latest/web_console/web-console.html

OKD4.9: Preparing to install on a single node

https://docs.openshift.com/container-platform/4.9/installing/installing_sno/install-sno-preparing-to-install-sno.html